Chapter 9: Testing for a Difference: Multiple Related Samples

9.1 Nine out of ten trials might be used as the criterion for mastering the maze because, under random choice, a result that extreme has a probability of less than 0.05 (two-tailed test). This is a binomial test with a probability of success of 0.5: the hamster has a choice between two doors at the end of the T-maze, so if it were choosing a door at random, it would be correct half of the time. Because choosing the door with the food at least nine times out of ten has a probability of less than 0.05 under that assumption, you can reject the null hypothesis that the hamster is randomly choosing a door. Of course, there is always the possibility of a Type I error.
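The binomial probability behind this criterion can be checked directly. The following is a minimal sketch using only the Python standard library; the function name `binomial_upper_tail` is an illustrative choice, not something from the text.

```python
from math import comb


def binomial_upper_tail(successes: int, trials: int, p: float = 0.5) -> float:
    """Return P(X >= successes) for X ~ Binomial(trials, p)."""
    return sum(
        comb(trials, k) * p**k * (1 - p) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Probability of 9 or 10 correct choices in 10 trials under random choice.
one_tailed = binomial_upper_tail(9, 10)
# With p = 0.5 the distribution is symmetric, so the two-tailed
# probability is twice the one-tailed probability.
two_tailed = 2 * one_tailed

print(f"one-tailed p = {one_tailed:.4f}, two-tailed p = {two_tailed:.4f}")
```

The one-tailed probability is 11/1024 (about 0.011), so the two-tailed probability is about 0.021. By the same calculation, eight out of ten gives a two-tailed probability of about 0.109, which would not meet the 0.05 criterion.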

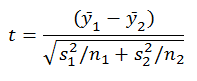

9.4 Effectively, both the single-sample and the paired-samples t-tests compare a single sample mean against a known population mean. After calculating the d-scores for a paired-samples design, you have only one score per subject. You are back to a single-sample t-test. You can forget the scores in the two original conditions for the moment. The analysis is now analogous to one where there is a known population mean and an unknown variance. The question here is framed as follows: Is the difference between the observed mean of the d-scores and the expected mean of the d-scores (0.0) greater than what we would expect due to chance alone? The expected mean of the d-scores (0.0) serves as the known population mean. Similarly to the single-sample t-test in which you estimate the population variance with the sample variance, in the paired-samples test you use the variance in the d-scores to estimate the variance in the population of d-scores.
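To make the equivalence concrete, here is a minimal sketch in which the paired-samples test is carried out as a single-sample t-test on the d-scores. The `before` and `after` scores are hypothetical values invented for illustration, and `one_sample_t` is an illustrative helper name.

```python
from math import sqrt
from statistics import mean, stdev


def one_sample_t(scores, mu0=0.0):
    """t = (sample mean - mu0) / (sample SD / sqrt(n)), with n - 1 df."""
    n = len(scores)
    standard_error = stdev(scores) / sqrt(n)  # stdev uses the n - 1 denominator
    return (mean(scores) - mu0) / standard_error


# Hypothetical paired scores: one pair per subject.
before = [12, 15, 11, 14, 13, 16]
after = [14, 18, 12, 17, 13, 19]

# Reduce each pair to a single d-score; the original conditions
# are no longer needed for the test itself.
d_scores = [a - b for a, b in zip(after, before)]

# The expected mean of the d-scores under the null hypothesis is 0.0.
t_value = one_sample_t(d_scores, mu0=0.0)
df = len(d_scores) - 1
print(f"t({df}) = {t_value:.3f}")
```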

9.5 There are two ways for t-values to change when the condition means remain constant: a change in the variance or a change in n. With either a decrease in the variance or an increase in n, the t-value will increase. With either an increase in the variance or a decrease in n, the t-value will decrease. In the first case the denominator of the t-ratio (the standard error) is decreased, thus the t-value is increased. In the second case, the denominator is increased, thus the t-value is decreased.
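A short sketch shows the effect of each change on the t-ratio while the mean difference is held constant. The numbers are hypothetical and chosen only to make the arithmetic easy to follow; `t_from_summary` is an illustrative helper name.

```python
from math import sqrt


def t_from_summary(mean_difference: float, variance: float, n: int) -> float:
    """t-ratio: mean difference divided by the standard error sqrt(variance / n)."""
    return mean_difference / sqrt(variance / n)


# Hypothetical baseline: mean difference 2, variance 16, n = 16.
t_baseline = t_from_summary(2.0, variance=16.0, n=16)

# Same mean difference with a smaller variance: the denominator shrinks.
t_smaller_variance = t_from_summary(2.0, variance=4.0, n=16)

# Same mean difference with a larger n: the denominator also shrinks.
t_larger_n = t_from_summary(2.0, variance=16.0, n=64)

print(t_baseline, t_smaller_variance, t_larger_n)
```

In both modified cases the standard error is halved, so the t-value doubles even though the mean difference has not changed.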

9.6 The absolute values of the two block means in the two figures are different, but the difference between them is the same (10). The reason for this is that the condition means have been subtracted from the scores in each condition. This has removed both the grand mean and the treatment effect from all of the scores, leaving only the effect of block and error.

9.7 The easiest way to solve this problem is to begin at the end and work backwards. The original observed F-value (α = 0.05) for a test with 2 dftreatment and 9 dferror was 0.982. Clearly, if the block accounted for no sum of squares, your power would be reduced: there would be one less dferror but no reduction in the SSerror. Thus the MSerror would be 41.25 (330/8) and the resulting F-value would be reduced to 0.872. The challenge question can be restated, however, as how much SSblock can be present without an increase in power? If you divide the SSerror by its df (330/9), you find that each df is associated with 36.667 SSerror. If you subtract 36.667 from 330, you have a new SSerror of 293.333. Remembering that you lose 1 df to the blocking variable, you now have 8 dferror. Thus the new MSerror is 36.667 (293.333/8), the same as the original. With 2 dftreatment and 8 dferror the new critical F-value for significance is 4.46, whereas the critical value for 2 and 9 df was 4.26. Thus, your observed F-value of 0.982 is now further from the critical value, which represents a loss of power. This illustrates the trade-off of degrees of freedom for sum of squares that accompanies a randomized block design. It only pays to include a blocking variable that is known to account for a substantial amount of the sum of squares in the dependent measure.
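The arithmetic in this answer can be retraced in a few lines. This is a sketch under one stated assumption: the MStreatment is not given in the answer, so it is recovered here from the reported F-value (F = MStreatment / MSerror). Variable names are illustrative.

```python
ss_error = 330.0   # SSerror from the original analysis
df_error = 9       # dferror from the original analysis
f_original = 0.982

ms_error = ss_error / df_error
# Assumption: MStreatment is derived from the reported F, not taken from the text.
ms_treatment = f_original * ms_error

# Case 1: the block accounts for no sum of squares.
# One df is lost, but SSerror is unchanged, so MSerror rises and F falls.
ms_error_no_block_ss = ss_error / (df_error - 1)
f_no_block_ss = ms_treatment / ms_error_no_block_ss

# Case 2: the block accounts for exactly one df's worth of SSerror.
# MSerror, and therefore the observed F, is unchanged.
ss_block_break_even = ms_error
ms_error_break_even = (ss_error - ss_block_break_even) / (df_error - 1)
f_break_even = ms_treatment / ms_error_break_even

print(f"MSerror original:        {ms_error:.3f}")
print(f"MSerror, SSblock = 0:    {ms_error_no_block_ss:.3f}")
print(f"MSerror at break-even:   {ms_error_break_even:.3f}")
```

Even in the break-even case the observed F is unchanged while the critical value rises with the lost df, which is the loss of power the answer describes.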

9.8 Assuming that α is held constant at 0.05, the three principal factors that influence power are the size of the differences between or among the means, the variance within the conditions, and the number of observations or n. As the differences between or among the means increase, power increases. The variance among the means is the basis for MStreatment, the numerator of the F-ratio. As the average variance within the conditions decreases, power increases. The average within-condition variance is MSerror, the denominator of the F-ratio. As the number of observations increases, dferror increases. As dferror increases, the critical value of F decreases; a smaller critical value is easier to exceed, so power increases.

9.9 The SStotal is the sum of the squared differences of all the observations about the grand mean. The partitioning of these squared differences may change, but, regardless of the design, this sum or total cannot change.
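The point can be illustrated by partitioning the same scores two ways. The sketch below uses hypothetical scores (rows as blocks, columns as treatment conditions) and compares a one-way partition (treatment + within-condition error) with a randomized block partition (treatment + block + residual error).

```python
from statistics import mean

# Hypothetical scores: each row is a block, each column a treatment condition.
scores = [
    [10, 12, 15],
    [14, 15, 19],
    [8, 11, 12],
    [12, 16, 18],
]
n_blocks = len(scores)
n_treatments = len(scores[0])

grand_mean = mean(x for row in scores for x in row)
treatment_means = [mean(row[j] for row in scores) for j in range(n_treatments)]
block_means = [mean(row) for row in scores]

# Squared deviations of every observation about the grand mean.
ss_total = sum((x - grand_mean) ** 2 for row in scores for x in row)

ss_treatment = n_blocks * sum((m - grand_mean) ** 2 for m in treatment_means)
ss_block = n_treatments * sum((m - grand_mean) ** 2 for m in block_means)

# One-way design: error is the variation of scores about their condition mean.
ss_within = sum(
    (scores[i][j] - treatment_means[j]) ** 2
    for i in range(n_blocks)
    for j in range(n_treatments)
)

# Randomized block design: error is what remains after removing
# both the treatment effect and the block effect.
ss_residual = sum(
    (scores[i][j] - treatment_means[j] - block_means[i] + grand_mean) ** 2
    for i in range(n_blocks)
    for j in range(n_treatments)
)

print(f"SStotal                         = {ss_total:.3f}")
print(f"treatment + within              = {ss_treatment + ss_within:.3f}")
print(f"treatment + block + residual    = {ss_treatment + ss_block + ss_residual:.3f}")
```

Both partitions sum to the same total; the blocking variable only moves some of the one-way error sum of squares into SSblock.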

9.10 The noun recognition data are best understood as being ratio in nature. You can assume that the intervals are equal, that is, that the difference between any pair of adjacent possible scores is the same. Because it is possible for a subject to fail to recognize any of the nouns correctly, a score of 0 (a true zero) is possible. Equal intervals together with a true zero are what define ratio data.